And as the user drops new operators onto the canvas they can specify dependencies through an intuitive click and drag interaction. A palette focused on the most commonly used operators on the left, and a context sensitive configuration panel on the right. The user is presented with a blank canvas with click & drop operators. It was critical to make the interactions as intuitive as possible to avoid slowing down the flow of the user. The “Editor” is where all the authoring operations take place - a central interface to quickly sequence together your pipelines.

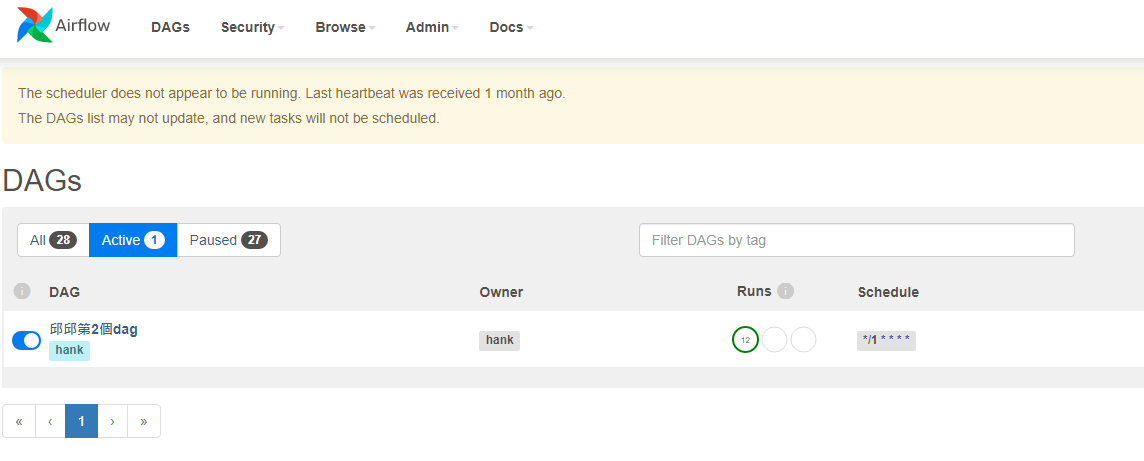

And once the pipeline has been developed through the UI, users can deploy and manage these data pipeline jobs like other CDE applications thru the API/CLI/UI.įigure 1: “Editor” screen for authoring Airflow pipelines, with operators (left), canvas (middle), and context sensitive configuration panel (right) More advanced users can still continue to deploy their own customer Airflow DAGs as before, or use the Pipeline authoring UI to bootstrap their projects for further customization (as we describe later the pipeline engine generates Airflow code which can be used as starting to meet more complex scenarios). With CDE Pipeline authoring UI, any CDE user irrespective of their level of Airflow expertise can create multi-step pipelines with a combination of out-of-the-box operators (CDEOperator, CDWOperator, BashOperator, PythonOperator). This laid the foundation for some of the key design principles we applied to our authoring experience. But a lot of times when we looked across Airflow DAGs we noticed similar patterns, where the majority of the operations were identical except for a series of configurations like table names and directories – the 80/20 rule clearly at play. Making the most commonly used as readily available as possible is critical to reduce development friction.Īirflow DAGs are a great way to isolate pipelines and monitor them independently, making it more operationally friendly for DE teams. Anyway to minimize coding and manual configuration will dramatically streamline the development process.Īlthough Airflow offers 100s of operators, users tend to use only a subset of them. Writing code is error prone and requires trial and error. In the process several key themes emerged:īy far the biggest barrier for new users is creating custom Airflow DAGs. We started out by interviewing customers to understand where the most friction exists in their pipeline development workflows today.

We wanted to hide those complexities from users, making multi-step pipeline development as self-service as possible and providing an easier path to developing, deploying, and operationalizing true end-to-end data pipelines. This presented challenges for users in building more complex multi-step pipelines that are typical of DE workflows. Until now, the setup of such pipelines still required knowledge of Airflow and the associated python configurations. That’s why we are excited to announce the next evolutionary step on this modernization journey by lowering the barrier even further for data practitioners looking for flexible pipeline orchestration - introducing CDE’s completely new pipeline authoring UI for Airflow. As we mentioned before, instead of relying on one custom monolithic process, customers can develop modular data transformation steps that are more reusable and easier to debug, which can then be orchestrated with glueing logic at the level of the pipeline. This combined with Cloudera Data Engineering’s (CDE) first-class job management APIs and centralized monitoring is delivering new value for modernizing enterprises. Today, customers have deployed 100s of Airflow DAGs in production performing various data transformation and preparation tasks, with differing levels of complexity. Airflow has been adopted by many Cloudera Data Platform (CDP) customers in the public cloud as the next generation orchestration service to setup and operationalize complex data pipelines.
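The interviews found that most operations across DAGs were identical, with only configurations such as table names and directories changing. That 80/20 pattern can be illustrated as a single shared template expanded once per table. This is a minimal sketch, not how CDE implements it. The `spark-submit ingest.py` command, `TaskConfig`, and `build_tasks` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class TaskConfig:
    """One pipeline step: an identifier plus the command it runs (hypothetical)."""
    task_id: str
    command: str


# Hypothetical shared template: the "80%" that is identical across pipelines.
INGEST_TEMPLATE = Template(
    "spark-submit ingest.py --source $source_dir --target-table $table"
)


def build_tasks(tables: dict[str, str]) -> list[TaskConfig]:
    """Expand the shared template once per (table, source directory) pair.

    Only the table name and directory vary between tasks (the "20%").
    """
    return [
        TaskConfig(
            task_id=f"ingest_{table}",
            command=INGEST_TEMPLATE.substitute(source_dir=src, table=table),
        )
        for table, src in tables.items()
    ]
```

For example, `build_tasks({"orders": "/data/raw/orders"})` returns a single task, `ingest_orders`, whose command points at `/data/raw/orders`. Adding a table means adding one dictionary entry rather than writing another operator by hand.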
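The idea that the pipeline engine turns what is drawn on the canvas into Airflow code can be sketched in the same spirit. The generator below is hypothetical and is not the CDE pipeline engine. It makes three assumptions:

- A pipeline is a mapping from task IDs to shell commands, plus a list of `(upstream, downstream)` edges standing in for the click-and-drag dependencies.
- Edges that would form a cycle are rejected using Python's standard `graphlib` module.
- The output is the source of an Airflow 2 DAG (2.4 or later, for the `schedule` argument) that uses only `BashOperator`. The CDE-specific operators are left out because their import paths are not given here.

```python
import keyword
from graphlib import TopologicalSorter

# Header of the generated file; assumes Airflow 2.4+ (`schedule` argument).
HEADER = [
    "from datetime import datetime",
    "",
    "from airflow import DAG",
    "from airflow.operators.bash import BashOperator",
    "",
]


def generate_dag_source(
    dag_id: str,
    commands: dict[str, str],
    edges: list[tuple[str, str]],
) -> str:
    """Render Airflow DAG source from task commands and canvas edges (sketch)."""
    if not commands:
        raise ValueError("a pipeline needs at least one task")
    for task_id in commands:
        # Task IDs double as Python variable names in the generated file.
        if not task_id.isidentifier() or keyword.iskeyword(task_id):
            raise ValueError(f"task id is not a usable identifier: {task_id!r}")

    # Map each task to the set of tasks that must finish before it (its upstreams).
    upstreams: dict[str, set[str]] = {task_id: set() for task_id in commands}
    for upstream, downstream in edges:
        if upstream not in commands or downstream not in commands:
            raise ValueError(f"edge references unknown task: {upstream} -> {downstream}")
        upstreams[downstream].add(upstream)

    # static_order() raises graphlib.CycleError (a ValueError) if the edges form a cycle.
    order = list(TopologicalSorter(upstreams).static_order())

    lines = list(HEADER)
    lines.append(
        f"with DAG(dag_id={dag_id!r}, start_date=datetime(2023, 1, 1), "
        "schedule=None, catchup=False) as dag:"
    )
    # Declare operators in dependency order so the file reads top to bottom.
    for task_id in order:
        lines.append(
            f"    {task_id} = BashOperator(task_id={task_id!r}, "
            f"bash_command={commands[task_id]!r})"
        )
    # Each canvas edge becomes an Airflow bitshift dependency.
    for upstream, downstream in edges:
        lines.append(f"    {upstream} >> {downstream}")
    return "\n".join(lines) + "\n"
```

For example, `generate_dag_source("demo", {"extract": "echo extract", "load": "echo load"}, [("extract", "load")])` produces a DAG file in which the line `extract >> load` encodes the dependency. The emitted text is ordinary Airflow code, so it can be edited by hand afterwards, which mirrors the bootstrap-then-customize workflow described above.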